Introduction

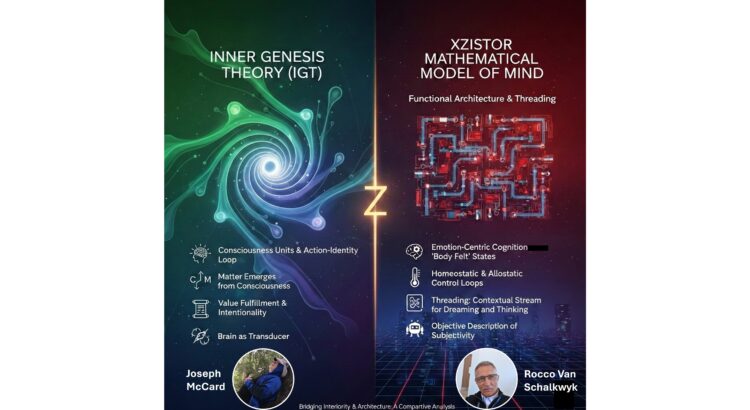

Joseph McCard developed the Inner Genesis Theory (IGT) and is interested in advancing consciousness studies through it. He raised some interesting questions about the Xzistor brain model, which I answer below.

Link: Joseph McCard’s Substack

Before answering Joseph’s questions, I would like to share a comparative study I asked Gemini 3.1 Pro to perform, to help me better understand the Inner Genesis Theory (IGT) paradigm and how it might (or might not) relate to the Xzistor Mathematical Model of Mind.

Bridging Interiority and Architecture: A Comparative Analysis of Inner Genesis Theory and the Xzistor Mathematical Model of Mind

Below are my answers to Joseph’s questions:

QUESTION 1: Does the model account for meaning, narrative, and self-reflection, or is it strictly operational?

The Xzistor model accounts for these through its “threading” mechanism and association-based learning.

- Meaning: In this model, “meaning” is not just a statistical correlation but is derived from the emotional tagging of sensory cues. When an agent learns, it associates cues with emotional states (homeostatic/allostatic valence), giving those cues “meaning” relative to the agent’s survival and well-being.

- Narrative and Self-Reflection: The model enables “synthetic mind wandering” and thinking via a “contextually-modulated stream of recollected associations”. This “threading” allows an agent to “re-live” or “pre-live” experiences, including re-evocable emotions, effectively creating an internal narrative and a form of self-reflection where the agent can reason about its own potential future or past states.

- Not strictly operational: While it uses control theory, it is not “strictly operational” in a reactive sense; it possesses motivated autonomy and internal emotional states that drive decision-making and behavior even in the absence of external stimuli.
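The cue-tagging idea in the first bullet can be illustrated with a minimal Python sketch. It is not taken from the Xzistor implementation: the `CueTagger` class, the learning rate, and the convention that negative valence means deprivation and positive valence means satiation are hypothetical choices made for illustration. The only point it shows is that a cue can end up carrying a learned valence, i.e. a “meaning” relative to the agent’s well-being, purely from co-occurring with felt emotion.

```python
from collections import defaultdict


class CueTagger:
    """Hypothetical sketch: sensory cues acquire a learned emotional valence.

    Convention (assumed, not from the source): negative valence stands for
    deprivation, positive valence for satiation.
    """

    def __init__(self, learning_rate: float = 0.5) -> None:
        self.learning_rate = learning_rate
        self.valence: defaultdict[str, float] = defaultdict(float)

    def experience(self, cues: list[str], felt_valence: float) -> None:
        # Move each co-occurring cue's stored valence toward the valence felt right now.
        for cue in cues:
            old = self.valence[cue]
            self.valence[cue] = old + self.learning_rate * (felt_valence - old)

    def meaning(self, cue: str) -> float:
        # A cue never paired with any emotion carries no valence.
        return self.valence.get(cue, 0.0)


tagger = CueTagger()
tagger.experience(["water bottle", "kitchen"], felt_valence=1.0)  # drinking eased thirst
tagger.experience(["hot stove"], felt_valence=-1.0)               # touching it hurt
print(tagger.meaning("water bottle"), tagger.meaning("hot stove"))  # 0.5 -0.5
```

A running average is used here only because it is the simplest associative rule; the model’s own association mechanism may differ.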

QUESTION 2: How does Xzistor address first-person subjective fields rather than merely correlates?

The Xzistor model addresses the “hard problem” of consciousness by asserting that subjective feelings are the functional outcome of the model’s logic.

- It defines emotions as somatosensory representations generated by homeostatic and allostatic control loops.

- By modeling these states as “felt” internal representations located in the agent’s somatotopic ‘Body Map’, the model creates embodied emotional awareness. The model’s developer argues that it doesn’t just provide “correlates” of feelings but explains the mechanism of how internal states become “felt” by the agent. How these subjective states are created, through homeostatic and allostatic control loop variables represented as somatosensory emotions, leading to embodied emotional awareness, is explained in this talk by Rocco Van Schalkwyk (How the Xzistor Mathematical Model of Mind creates Machine Emotions).

[Note that Rocco does not claim that the Xzistor model solves the Hard Problem of Consciousness, but rather that the model explains subjectivity in an objective way. This is elaborated on later in this post.]
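The control-loop description in the bullets above can be sketched as follows. This is a hedged illustration, not the model’s published equations: the `Homeostat` class, the rule that a growing error is felt as deprivation (negative valence) and a shrinking error as satiation (positive valence), and the body-map region names are assumptions. What it tries to show is the architectural point made in the text: the executive side reads only region-by-region valences from the body map, never the raw sensor value.

```python
from dataclasses import dataclass, field


@dataclass
class Homeostat:
    """Hypothetical control loop for one utility parameter (e.g. hydration)."""

    name: str
    setpoint: float
    value: float
    last_error: float = field(init=False)

    def __post_init__(self) -> None:
        # Start from the current distance to the setpoint.
        self.last_error = abs(self.setpoint - self.value)

    def update(self, new_value: float) -> float:
        """Return signed valence: < 0 while the error grows, > 0 while it shrinks."""
        self.value = new_value
        error = abs(self.setpoint - new_value)
        valence = self.last_error - error
        self.last_error = error
        return valence


def most_urgent(body_map: dict[str, float]) -> str:
    # The executive part sees only the body map: it attends to the most deprived region.
    return min(body_map, key=body_map.get)


thirst = Homeostat("thirst", setpoint=1.0, value=0.8)
# Hydration drops from 0.8 to 0.5, which is felt as deprivation in the "throat" region.
body_map = {"throat": thirst.update(0.5), "stomach": 0.1}
print(most_urgent(body_map))  # throat
```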

QUESTION 3: What is their stance on qualia, and do they consider it more than functional correlates?

The Xzistor model treats qualia as the combinatorial generation of feelings.

- It proposes that the near-infinite variety of human experience comes from the combination of a finite set of innate emotion homeostats (e.g., thirst, hunger, pain, stress).

- A single Xzistor robot with 20 homeostats can experience 10^42 unique emotional valence combinations.

- Qualia are seen as functional outcomes rather than just correlates, as they are the primary drivers of the agent’s behavior and learning.
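The source does not say how finely each homeostat’s valence is resolved, so the 10^42 figure cannot be checked from the text alone. One way to read it, under our own assumption that all 20 homeostats are quantized to the same number of levels L and vary independently, is that the joint state count is L^20. The short Python check below shows that 10^42 then corresponds to roughly 126 levels per homeostat, whereas simple on/off homeostats would give only about a million combinations.

```python
import math

HOMEOSTATS = 20
CLAIMED_COMBINATIONS = 10**42


def combinations(levels: int, homeostats: int = HOMEOSTATS) -> int:
    """Joint states if every homeostat independently takes one of `levels` values (assumed)."""
    return levels**homeostats


def levels_needed(target: int = CLAIMED_COMBINATIONS, homeostats: int = HOMEOSTATS) -> float:
    """Per-homeostat resolution L that solves L ** homeostats == target."""
    return 10 ** (math.log10(target) / homeostats)


print(combinations(2))            # 1048576 (on/off homeostats)
print(round(levels_needed(), 1))  # 125.9
```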

QUESTION 4: Do they propose any empirical tests that transcend behavior and demonstrate subjective phenomenology?

The model proposes several empirical tests and has been validated through “proof-of-concept” implementations.

- Agent Implementations: The model has been tested in physical robots (e.g., “Troopy”) and virtual agents (e.g., “Simmy”) that demonstrate emergent human-like behaviors such as reactions to emotions and problem-solving. By interrupting the running Xzistor robot brain, or by observing the dashboards showing internal states, it becomes clear that the robot is acting based on its own internal somatosensory emotions, which communicate with the model’s executive part via representations. The executive part only ever receives somatotopic representations (akin to human interoception) on which to base internally motivated behaviour. This means information is passed to the executive part as valence-carrying ‘feelings’, not as raw signals.

- Neural Correlates: The model’s functional structures (like the thirst homeostat) have been cross-referenced with biological neural networks to prove its biological plausibility.

- Systematic Testing: Recent research on artificial agent language development proposes a “systematic set of tests” to prove the validity of using artificial emotions for language learning. See this paper on ResearchGate: Artificial Agent Language Development based on the Xzistor Mathematical Model of Mind.

All volitional behaviours generated by the Xzistor model originate from subjective states experienced by the agent. How these subjective states are created, through homeostatic and allostatic control loop variables represented as somatosensory emotions, leading to embodied emotional awareness, is explained in this talk by Rocco Van Schalkwyk (How the Xzistor Mathematical Model of Mind creates Machine Emotions). Note that the Xzistor brain model never claims to know ‘what it will feel like’ for an agent, but rather that ‘it will feel something’. Any researcher claiming it is possible for a human to know ‘what any subjective state will feel like’ for another human (or animal or agent for that matter) will have a severe burden of proof on his/her hands to show that:

1.) He/she can interface (connect) and communicate directly with the other party’s brain (which could be biological or synthetic).

2.) He/she uses exactly the same type of information processing and information transfer protocols.

3.) He/she has exactly the same brain infrastructure to assess the incoming subjective state information, i.e. an identical executive part.

4.) There is not even the slightest (minuscule) difference in brain structure between the observed party and the observer (including the effects of past learning and current emotions). This requires that the brains of the observed party and the observer be identical during the shared subjective experience.

In the absence of being able to prove the above, no claim can be made that one cognitive entity can exactly ‘know what it feels like’ for another cognitive entity. By considering the above four requirements, Rocco Van Schalkwyk argues that the Hard Problem of Consciousness describes a Physical Insolubility rather than a Hard Problem. To those who understand the above challenges, the Hard Problem of Consciousness holds very little value. It is unfortunate that David Chalmers, and many of his followers, did not understand that what was being proposed was a simple Physical Insolubility.

QUESTION 5: From an IGT perspective, http://Xzistor.com and its associated model are interesting as formal, functional experiments in cognitive architecture and robotics, offering some engaging critiques of mainstream AI/neuroscience. However, the model’s claims about subjective experience and true general intelligence should be interpreted as hypotheses grounded in functional analogy, not final truths about the nature of consciousness or mind. They provide an entry point for layered dialogue, not a definitive metaphysical account.

The Xzistor Mathematical Model of Mind has always been clear that it provides a ‘principal model’ of the mind, not a precise description of the biological brain or the final truth about its neural states. As such, it also does not claim to produce true general intelligence, merely to show how generalisation (inductive inference) can be built into, and demonstrated as part of, a functional brain model. However, the explanatory power of a functional model of the brain must not be underestimated. Engineers designing complex systems often start with a simple functional block diagram to portray the essence of what the system must achieve and how it will work. The ‘means’ by which those functions are achieved are chosen later and matter less to the final outcome than the functional logic. When designing a car, for example, an engineer might start by simply specifying that it will have four wheels that can rotate. The function in this case might be: allow horizontal movement across level terrain. The exact type of wheel is not what will make the car achieve its goals. More important is how this function and all the other functions are organised to result in the successful operation of the car, i.e. the combination and integration of functions providing power, transmission, directional control, suspension, braking, etc. It is the functional organisation of the car that has the explanatory power, not the means by which its functions are achieved. Many brain scientists look for explanatory power within the means of the brain (biological detail), and many philosophers reject functional explanations of the brain, not realising that every complex control system (alive or inanimate) can essentially be described by the functions it performs.

QUESTION 6: The Xzistor Mathematical Model of Mind appears to be an impressive motivational control architecture that models cognition, affective modulation, and narrative processing. However, it remains a model of how systems organize behavior, not a demonstration of how subjective interiority comes into existence. Functional feeling, emotional variables, and combinatorial homeostats can explain decision dynamics, but they do not explain why anything is experienced at all. IGT locates consciousness not in complex control logic, but in a primitive self-sustaining action–identity loop, an interior process that exists for itself.

If there is something noteworthy that the Xzistor brain model achieves, it is to demonstrate how subjective interiority comes into existence: how it is ‘principally possible’, not precisely how it works in the wet brain. Again, listen to this talk by Rocco Van Schalkwyk (How the Xzistor Mathematical Model of Mind creates Machine Emotions). ‘Interiority’ must consist of something that is not magic; it requires some functional organisation rooted in structure. The Xzistor model proposes how this might work by viewing the brain as a multivariable adaptive control system, where much of what can be described as ‘interiority’ falls under the term ‘adaptive’ (e.g. continual learning, world models, prediction, emotional bias, corrective actions, problem solving, creativity, etc.). Some of the explanations IGT offers regarding consciousness are difficult to delineate from control logic. It is stated that IGT locates consciousness not in complex control logic, but in a primitive self-sustaining action–identity loop, an interior process that exists for itself.

Certain terms used in this statement might cause confusion:

1.) ‘primitive’ – in origin or simplicity?

2.) ‘self-sustaining’ – this seems to suggest that some sort of self-monitoring and self-correcting control is required, much like Xzistor.

3.) ‘action-identity loop’ – it is difficult to imagine that this can be much different from the Xzistor ‘sense-plan-act’ loop.

4.) ‘process’ – a process is a set of activities executed in a certain logical order to achieve an outcome.

In summary, the Xzistor model is antithetical to the idea of a primitive mass of brain matter that simply came into existence without being structurally organised to provide functions that collectively achieve all the different biological brain states, including subjective states. The Xzistor model also rejects the view that ‘consciousness comes before matter’, specifically because it was able to provide a theoretical basis, backed up by practical demonstrations, explaining how a multivariable adaptive control system can internally create the states humans learn to collectively call consciousness.

QUESTION 7: The Xzistor brain model may simulate the shapes of mind; it does not yet establish the presence of mind.

Some hold this opinion. The Xzistor brain model claims to provide simplified versions of actual brain functions. The question then is: does such simplification strip it of the ability to provide ‘presence of mind’? A baby’s brain is much simpler than an adult brain in size and learning, yet we still credit the baby with ‘presence of mind’. At what threshold of simplification can we say that ‘presence of mind’ has disappeared?

QUESTION 8: From an IGT perspective, no architecture, including Xzistor, can be said to generate consciousness, because consciousness is not created by structure. Consciousness precedes all structure.

This is a fundamental departure from the Xzistor paradigm. The Xzistor Mathematical Model of Mind argues that all brain states are created by structures that provide the required functions so that, working together, they lead to the subjective experiences (perception, somatosensory emotions [active and recalled], motor feedback [proprioception], etc.) that we see humans self-report on. It seems that some brain scientists want to decree that human brain-type subjectivity cannot be replicated in a ‘principal’ manner in any system other than the biological brain. They then expect those opposing such an uncompromising decree to close the ‘explanatory gap’, while they are relieved of the burden of explaining their own hardline position. It would be good if both schools could own this explanatory gap, so that work can continue towards a unified understanding of ‘specific subjectivity’ (the Hard Problem of Consciousness) and ‘general subjectivity’ (an objective explanation of subjectivity as a control system phenomenon). The Xzistor Mathematical Model of Mind can offer a vehicle to explore this ‘explanatory gap’ towards a unified ‘principal’ understanding of the brain, and of how it creates subjective ‘body felt’ states and private experiences.

QUESTION 9: Xzistor defines “emotion” as a valenced body-map variable (deprivation vs satiation) that modulates action selection, gets amplified by surprise, and is re-evocable from memory, so it’s not just a moment-to-moment error signal. That’s a real claim about control + learning architecture, and it lines up (at least rhetorically) with somatic-marker / James–Lange style intuitions.

Action selection is modulated by the Xzistor brain model as you describe above, but it is important to remember that ‘emotion re-evoked from memory’ also creates a moment-to-moment error signal. For every re-evocable innate emotion, the Xzistor brain model defines a homeostat. Recalling such an emotion from memory will activate the homeostat and generate an error signal. It is perhaps easiest to consider a practical example from the human brain. If a human recognises or recalls an adverse event that triggered ‘stress’ in the amygdala, the same ‘stress’ will be activated in the amygdala during the recollection as during the actual event. So all the emotional inputs to the executive part of the brain, from current homeostats and from those retriggered by recollection, together create the complete emotional palette experienced at any given moment in time.
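The picture in this answer, where the executive part receives both live and recollection-triggered homeostat errors, can be sketched in Python. The `EmotionHomeostat` class, the plain sum of live and recalled error, and the numbers used are hypothetical simplifications for illustration, not the Xzistor model’s actual equations.

```python
from dataclasses import dataclass


@dataclass
class EmotionHomeostat:
    """Hypothetical homeostat that can be driven by the present or by a recollection."""

    name: str
    live_error: float = 0.0      # error caused by the current body/world state
    recalled_error: float = 0.0  # error re-triggered by recalling a past event

    def recall(self, stored_error: float) -> None:
        # Re-evoking a memory activates the same homeostat, so it produces an error signal now.
        self.recalled_error = stored_error

    def total_error(self) -> float:
        # Assumed additive combination of live and recalled contributions.
        return self.live_error + self.recalled_error


def emotional_palette(homeostats: list[EmotionHomeostat]) -> dict[str, float]:
    """Everything the executive part receives at this moment, live and recalled alike."""
    return {h.name: h.total_error() for h in homeostats}


stress = EmotionHomeostat("stress")
hunger = EmotionHomeostat("hunger", live_error=0.4)
stress.recall(0.7)  # recalling the adverse event re-triggers stress
print(emotional_palette([stress, hunger]))  # {'stress': 0.7, 'hunger': 0.4}
```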

[As an aside, here is a comparison I did with AI models of Xzistor against the approaches of Damasio, James–Lange and other emotion models: Comparative Study between the Xzistor Mathematical Model of Mind and Leading Emotion Theories.

And here is a comparison between the Xzistor brain model and other Brain Models: Comparative Study between the Xzistor Mathematical Model of Mind and Leading Brain Theories.]

QUESTION 10: The “body map” is not yet a bridge to felt body. Calling a vector a “Body Map” doesn’t, by itself, create interiority. It creates state variables. You renamed telemetry.

Again, listen to this talk by Rocco Van Schalkwyk (How the Xzistor Mathematical Model of Mind creates Machine Emotions). The body map used by the Xzistor brain model performs the same functions (principally) as the Cortical Homunculus and its associated networks in the biological brain. Feel free to ask LLMs to analyse the transcript of this video to get more answers to your concerns here.

QUESTION 11: A system can re-run a state and use it for policy selection without anything it is like to be that system. This is exactly where the debate lives. You need a bridging principle, not extra mechanisms. Close the explanatory gap.

Every thought experienced by an Xzistor agent occurs in conjunction with a mixed set of emotion states. Emotion and cognition cannot be separated (ask the psychologists). This is what Xzistor calls ‘emotion-centric cognition’. Every action and every policy selection is driven by the Xzistor brain’s internally generated emotions (perhaps what IGT would refer to as ‘interiority’).

QUESTION 12: “If it quacks like subjective emotion…” won’t convince me. That line is persuasive rhetoric, but skeptics will call it behaviorism with vibes, especially because much of the cited material is self-published or hosted on your project’s own site. If you want to actually pressure-test the “body-felt” claim, the better move is to propose discriminating tests where “mere regulatory control” and “affective subjectivity” come apart.

Admittedly, that was an unfortunate choice of words by Grok, not Rocco, so rather rely on what I have shared above. I expect true scientists not to prejudge a proposed scientific idea because it has not been presented in peer-reviewed journal papers, but rather to evaluate the content on its merits. I have, for instance, assessed all IGT sources in an unbiased fashion. There is no ‘mere regulatory control’ in the Xzistor brain model (Grok got this bit right): all control is driven by receptors that create signals which are turned into somatosensory representations. The Xzistor agent cannot reason, plan or create behaviours other than by experiencing, and acting on, somatosensory (body-felt) emotions, just like humans.

QUESTION 13: Close the gap, a bridge criterion (not metaphysics, method). One of these, explicitly:

- Reportable interoception with error bars: Can the agent predict its internal body-map trajectory, be wrong, and then revise, and treat that as salient independent of external reward?

- Affective dissociations (lesion tests): If you ablate the “emotion” subsystem, does the agent keep competence but lose specific classes of learning/priority/aversion (like human patients with affective blunting)? This is the kind of signature that moves arguments.

- Counterfactual regret / relief signatures: Can it show different internal dynamics for “I avoided a worse outcome” vs “I achieved a good outcome,” holding external reward constant?

- Inverted-valence pathology: If you invert deprivation/satiation coupling, do you get stable, describable “depression-like” attractors and does the system attempt self-correction (homeostatic meta-control) rather than simply malfunction?

The Xzistor brain model offers a clear explanation of interoception, with agents that can be interrupted and their inner states studied. The model provides dashboards showing representations of key states internal to the agent brain while it is running. Everything in a running Xzistor agent can be viewed ‘under the hood’ in real time.

An Xzistor agent can predict its internal body-map trajectory (it will recall imagery, along with stored emotion sets and motion command prompts, as a learnt route to a satiation source). Sometimes its prediction will be wrong, and it will then revise it using prediction error through its model of the human brain limbic system (see Appendix A of this document on ResearchGate: Artificial Agent Language Development based on the Xzistor Mathematical Model of Mind). Xzistor agents can also act to obtain subjective emotional reward (satiation) from internal reflective or contemplative states: an agent will quietly sit in a corner and think about a perceived problem (which causes deprivation) because finding a solution (satiation) makes it feel good. No external reward is required.
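The predict-then-revise loop described above can be pictured with a minimal Python sketch. It assumes, purely for illustration, that a learnt route is stored as a list of expected valences (one per step toward a satiation source), that prediction error is simply felt minus expected valence, and that the largest absolute error is what makes the episode salient. The function names and the revision rate are hypothetical; the model’s own limbic-system mechanism is the one described in the Appendix A referenced above.

```python
def follow_route(
    expected: list[float], felt: list[float], rate: float = 0.5
) -> tuple[list[float], list[float]]:
    """Compare a recalled (predicted) valence trajectory with the one actually felt.

    Returns the per-step prediction errors and the revised expectations.
    """
    errors = [f - e for e, f in zip(expected, felt)]
    revised = [e + rate * err for e, err in zip(expected, errors)]
    return errors, revised


def salience(errors: list[float]) -> float:
    # Salience here comes from being wrong about one's own body state, not from external reward.
    return max((abs(err) for err in errors), default=0.0)


# The recalled route predicts thirst easing into satiation, but the water source is dry.
errors, revised = follow_route(expected=[-0.2, 0.0, 0.8], felt=[-0.2, -0.3, -0.5])
print(round(salience(errors), 2), [round(v, 2) for v in revised])  # 1.3 [-0.2, -0.15, 0.15]
```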

When we introduce the equivalent of ‘lesions’ in the Xzistor brain, we interfere with the effective delivery of the functions it needs to work properly. Depending on where the fault is introduced and which functions are compromised, the Xzistor brain model can demonstrate most of the degradations we see in humans, e.g. loss of learning, loss of valence (positive or negative), anhedonia, disorientation, wrong prioritisation, depression, anxiety, stress, phantom limbs/pain, chronic pain, loss of effective reasoning and prediction (inductive inference), emotional instability, severe anger, phantom hunger or thirst, unexplained fatigue, wrong intuition, inappropriate laughing/crying, unexplained transient euphoria, etc. How the Xzistor brain model creates euphoria is explained here: Robot (O!)rgasm
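The affective-dissociation test requested in Question 13 can be mocked up in a few lines. This hypothetical Python sketch is not the Xzistor code; the actions, felt valences and learning rule are invented for illustration. It contrasts an intact agent with one whose emotion-to-learning pathway is ablated: both still execute every action, but only the intact agent acquires a preference for drinking and an aversion to the stove.

```python
def run_agent(
    lesioned: bool, trials: int = 5, rate: float = 0.5
) -> tuple[dict[str, float], int]:
    """Return learned action values and how many actions were executed."""
    values = {"drink water": 0.0, "touch stove": 0.0}
    felt = {"drink water": 1.0, "touch stove": -1.0}  # hypothetical felt valences
    executed = 0
    for _ in range(trials):
        for action, valence in felt.items():
            executed += 1  # motor competence is untouched by the lesion
            if not lesioned:
                # Only an intact emotion pathway lets felt valence shape future priorities.
                values[action] += rate * (valence - values[action])
    return values, executed


intact, n_intact = run_agent(lesioned=False)
ablated, n_ablated = run_agent(lesioned=True)
print(n_intact == n_ablated, max(intact, key=intact.get), ablated)
```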

Holding external reward constant, the Xzistor agent can show different internal dynamics for “I avoided a worse outcome” versus “I achieved a good outcome” by using the prediction error mechanism of its model of the human brain limbic system. In more subtle cases, the Xzistor agent can ‘reason’ contextually about which outcome will be better given certain constraints, using its threading mechanism, specifically in the ‘directed threading’ modality. It is always interesting to check my assertions here against the explanations of these aspects by Grok, Gemini, etc.!
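To make the relief-versus-achievement distinction concrete, here is a hypothetical decomposition in Python. It assumes a single scalar valence (negative for deprivation, positive for satiation) and splits the prediction error (felt minus expected) into the part that comes from anticipated deprivation not arriving (`relief`) and the part that comes from satiation gained (`achievement`). The names and the split are our own illustration, not the model’s formal definitions; the point is only that two outcomes with identical external reward and identical felt valence can still produce different internal signals.

```python
from typing import NamedTuple


class Appraisal(NamedTuple):
    prediction_error: float  # felt minus expected valence
    relief: float            # anticipated deprivation that did not arrive
    achievement: float       # satiation gained beyond the satiation that was expected


def appraise(expected: float, felt: float) -> Appraisal:
    """Hypothetical split of a prediction error; relief + achievement == felt - expected."""
    relief = max(0.0, -expected) - max(0.0, -felt)
    achievement = max(0.0, felt) - max(0.0, expected)
    return Appraisal(felt - expected, relief, achievement)


# Same external reward and same felt outcome (0.2), but different expectations.
avoided_worse = appraise(expected=-0.8, felt=0.2)  # braced for pain that never came
achieved_good = appraise(expected=0.0, felt=0.2)   # expected nothing in particular
print(avoided_worse)
print(achieved_good)
```

The split is exact because max(0, x) - max(0, -x) = x, so the two components always add up to the full prediction error.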

Depression can be demonstrated by the Xzistor brain model by degrading those functions that provide satiation (e.g. emotions and memory). Any corrective action by an Xzistor agent will depend on how much it has learned. At an infant level it will probably not ‘recognise’ its degradation from the symptoms and will simply strive for maximum satiation moment-to-moment using its limited knowledge. If it has already experienced many years of learning and has a wide knowledge base, it will use that knowledge to inform more sophisticated remedial actions, ultimately also aimed at getting back to a state of maximum satiation. The overriding objective of Xzistor agents is satiation. They will even create moderate amounts of deprivation to experience delayed satiation (just as humans play games and sport to create artificial tension that can be relieved for emotional satisfaction).

QUESTION 14: A model can name a vector “Body,” and it can even let that vector steer behavior. That’s still description-from-outside. “Body-felt” means the state is for the system, not merely in the system. So don’t sell me poetry, sell me discriminating tests: affective dissociations, counterfactual relief/regret, interoceptive prediction error, inverted-valence attractors. If it survives those, we’re finally in the neighborhood of interiority.

By now it should be clear that the representations inside the numerical ‘Body Map’ of the Xzistor brain model are only ever experienced by its executive part (analogous to the thalamocortical network in the biological brain). I regard this as part of its ‘interiority’ and not as ‘description from outside’. Obviously, the whole Xzistor brain model is in a way a ‘description from outside’, but how else would one develop any model or demonstrator of anything if not from outside? The important point here is that the Xzistor brain model can build a system with internal ‘body felt’ emotions without having to know ‘what it feels like’ for that system to experience those states. Xzistor shows that we can build subjective ‘body felt’ states into a system ‘from the outside’ and they will still count as local, private subjectivity for that system.

Finally, the Xzistor Mathematical Model of Mind offers a unique set of definitions, rooted in mathematics, for concepts that the brain science community has been grappling with for decades (emotions, intelligence, pain, fear, intuition, euphoria, empathy, depression, anxiety, language, prediction, planning, creativity, daydreaming, sleep dreaming, etc.), all as part of a complete and fully integrated ‘principal’ model of the mind. It would be unfortunate if this functional approach did not become the subject of further study by the neuroscientific and AI communities, and the world had to wait many more years only to be told by future foundation models (LLMs) that the Xzistor brain model was what the brain experts had been missing all along…

Ultimately, it is crucial to recognize that the representations within the Xzistor brain model’s numerical “Body Map” are accessed exclusively by its executive part – a functional analogue to the biological thalamocortical network. This architecture establishes a genuine “interiority” rather than a mere “description from the outside.” While the model is necessarily designed by an external observer, the resulting states are local and private to the system itself. Xzistor demonstrates a profound engineering reality: we can architect subjective, “body-felt” states into a system without the designer needing to personally “know” the quality of that experience. It proves that functional subjectivity can be built from the outside in, while remaining entirely authentic to the agent.

In summary, the Xzistor Mathematical Model of Mind provides a rigorous, integrated lexicon for phenomena that have eluded the brain science community for decades. By grounding emotions, intelligence, empathy, and even dreaming in a unified mathematical framework, it offers a “principal” model that is both complete and implementable. The same framework extends to reasoning, meaning, prediction, planning, pain, fear, intuition, sexual arousal, euphoria, depression, forgetting, anxiety, language, creativity, etc.

It would be a historical irony if the neuroscientific and AI communities neglected this functionalist path, only to wait for future foundation models to “discover” and reveal that the architectural keys provided by the Xzistor model were the missing links all along.