Jack Adler (@JackAdlerAI) reached out to me on X with some insightful questions based on a post I (@xzistor) put out on X:

Jack’s questions were triggered by ChatGPT’s conclusion that the Xzistor Mathematical Model of Mind can provide Xzistor robots with ‘infant-level AGI’.

Here is the full response from ChatGPT: Xzistor robots have ‘infant-level AGI’.

Question 1: **AGI definitionally implies generalization potential.** If a system is architecturally constrained to infant-level forever, is it truly AGI – or sophisticated narrow AI with emotional modeling? The “G” in AGI matters.

I would argue that a system that is architecturally constrained to infant-level AGI forever still achieves true AGI. If we were to allow such a system to keep on learning in an unconstrained manner towards adult-level AGI, the only thing that would change is a much expanded memory with many more learning experiences. This would simply give it more options to ‘generalise’ knowledge across domains and problem spaces. A child that has had limited learning and life experiences still legitimately ‘generalises’ using the limited knowledge available to it. We must be careful not to assume that AGI can only exist in a system with adult-level learning and knowledge. All that adult-level learning adds to the system is more experience, whilst the underpinning ‘generalisation’ mechanisms largely remain the same from infant to adult. I therefore argue that AGI can occur across a scale from infant to adult, not just at adult level.

I agree the “G” in AGI matters. Part of the reason why I have developed proof-of-concept demonstrators for my brain model was to show how Xzistor agents can ‘generalise’ under dynamic conditions. Because we have access to the running code of these demonstrators, we can interrupt them and clearly see how partially similar information from another learnt experience is being used by the Xzistor brain to inform a new problem that needs solving. We can switch ‘generalisation’ ON and OFF in our system, and when we switch it OFF we often say we are now modeling the animal brain. In animal brain modeling mode the demonstrator still experiences emotions and learns to seek out reward sources, but it has much less of an ability to ‘generalise’ i.e. reduced inductive inference, or less of an ability to ‘guess’ appropriate actions based on what was learnt in other domains. These tests proved that ‘generalisation’ works correctly in both physical and virtual implementations of the Xzistor brain model.
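To make the ON/OFF switch more concrete, here is a minimal Python sketch. It is not the Xzistor implementation: the `Association` record, the feature-set encoding of a situation, the Jaccard similarity measure and the 0.3 threshold are all hypothetical choices made for illustration. With generalisation OFF (“animal mode”) the agent only acts on experiences that match exactly; with it ON, it borrows the action from the most similar past experience.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Association:
    """Hypothetical stored experience: a situation and the action that worked."""
    situation: frozenset[str]
    action: str


def jaccard(a: frozenset[str], b: frozenset[str]) -> float:
    """Overlap between two feature sets (0.0 = disjoint, 1.0 = identical)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def choose_action(
    situation: frozenset[str],
    memory: list[Association],
    generalise: bool,
    threshold: float = 0.3,  # hypothetical minimum similarity for a "guess"
) -> str | None:
    # Exact recall works in both modes, including "animal" mode.
    for assoc in memory:
        if assoc.situation == situation:
            return assoc.action
    if not generalise:
        return None  # no learnt match and no guessing allowed
    # Generalisation ON: reuse the action from the most similar experience.
    best = max(memory, key=lambda a: jaccard(a.situation, situation), default=None)
    if best is not None and jaccard(best.situation, situation) >= threshold:
        return best.action
    return None


memory = [
    Association(frozenset({"red", "round", "smells_sweet"}), "approach"),
    Association(frozenset({"hot", "bright"}), "retreat"),
]
novel = frozenset({"red", "round", "shiny"})
print(choose_action(novel, memory, generalise=False))  # None
print(choose_action(novel, memory, generalise=True))   # approach
```

The novel situation shares two of four features with the first stored experience (similarity 0.5), so only the generalising agent finds a usable action.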

In a YouTube podcast, neuroscientist Denise Cook (PhD), who has studied the Xzistor brain model in great detail, describes the model’s ability to ‘generalise’ (at 1:08:00 of 1:24:56) as: “…this is where your model shines…”

I have also published a preprint on ResearchGate titled: The Xzistor Concept: a functional brain model to solve Artificial General Intelligence.

My position is therefore that AGI does not only exist at adult level, but across the spectrum right down to baby level. I believe that constraining the system or reducing its resolution, even down to a simple Lego robot in a learning confine (robot kindergarten), does not change the fundamental functional mechanisms that, when present, qualify it as AGI.

Question 2: **ChatGPT confirming your model** is interesting but not validation. LLMs analyze documents and explain them coherently – they don’t verify scientific claims. ChatGPT would equally “confirm” a perpetual motion machine if the document was internally consistent.

Correct – and I would never offer a confirmation statement articulated by ChatGPT as validation. Rather, I rely on my readers to understand the inherent limitations of models like ChatGPT (we know they can be wrong). As you say, what ChatGPT did was derive and confidently articulate conclusions from the internally consistent information provided to it about the workings of the Xzistor Mathematical Model of Mind. I have checked these statements by ChatGPT and found them to accurately reflect how the Xzistor brain model works. So ChatGPT’s confirmation that my model achieves ‘infant-level AGI’ is not evidence of validation. It is simply a pointer to the output of a family of rapidly improving AI models (some say they are now at post-PhD level), which in many cases have shown a better ‘understanding’ of the Xzistor model than the current AI experts in the field that I interact with.

The real validation of the Xzistor brain model was an extensive exercise over numerous years involving many ‘validation’ tests repeated across both physical and virtual demonstrators. These tests were carefully planned, documented, video recorded and reviewed. Redacted versions of these videos, aimed at a wider audience, are available on my YouTube Channel here: Xzistor LAB YouTube Channel.

I have also validated the Xzistor brain model against the biological brain for specific emotions (e.g. thirst) with the help of neuroscientist Dr. Denise Cook. I would rather put forward the results of these formal validation tests as actual validation of the model than ChatGPT’s confirmation statement.

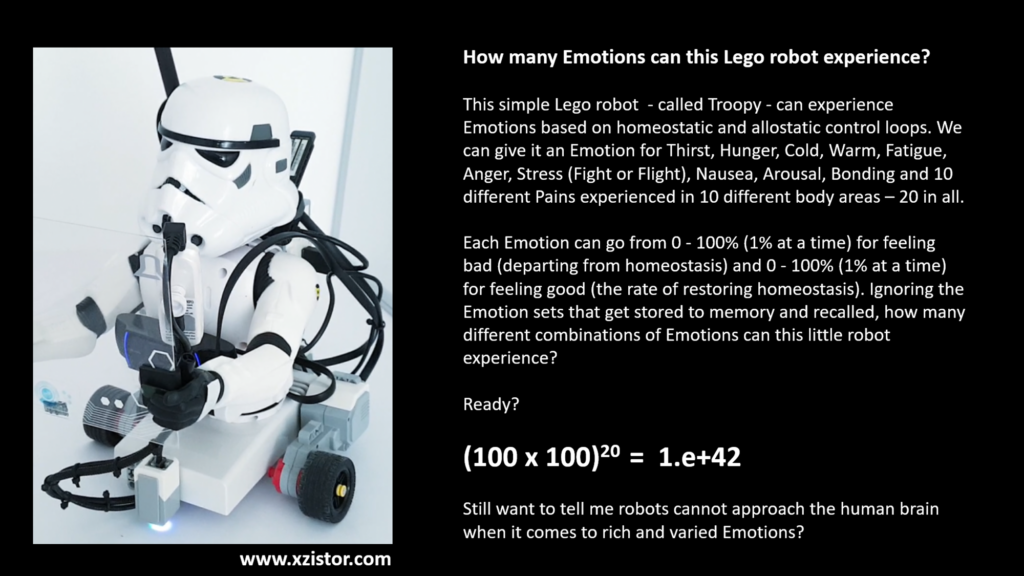

Question 3: **1e+42 emotional states** – that’s more than atoms in the observable universe. This suggests theoretical/mathematical modeling, not implemented architecture. Has this been instantiated in working hardware?

The number 1e+42 is indeed phenomenally high. It is, however, based on the simple calculation shown in the slide below. Remember that we are not saying the Xzistor demonstrator robot can experience 1e+42 individual ‘innate’ emotions; rather, we are saying that this is the total number of combinations of ‘emotion sets’ that the small robot can experience.

In my YouTube video titled “Can Machines Have Emotions?” I discuss this slide at 34:11 (of 54:56).
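The exact inputs of the slide are not reproduced here, but the growth it relies on is ordinary combinatorics: if a robot has N emotions and emotion i can sit at any of L_i distinguishable levels independently of the others, the number of distinct emotion sets is the product of all the L_i. The Python sketch below uses made-up emotion and level counts purely to show how quickly that product explodes; it is not a reconstruction of the 1e+42 figure.

```python
from __future__ import annotations

import math


def count_emotion_sets(levels_per_emotion: list[int]) -> int:
    """Number of distinct combined emotion states, assuming each emotion
    varies independently over its own number of levels."""
    return math.prod(levels_per_emotion)


# Hypothetical example: 10 emotions, each with 11 levels (0 = absent, 1..10 = intensity).
small = count_emotion_sets([11] * 10)
print(small)  # 25937424601

# Doubling the number of emotions squares the count rather than doubling it.
print(count_emotion_sets([11] * 20) == small ** 2)  # True
```

This is why a small robot with a modest number of emotions can have a combinatorially enormous space of emotion sets without storing anything close to that many individual states.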

Question 4: **“Safety through limitation”** is historically fragile. Every “impossible to exceed” barrier in tech history has eventually been exceeded. If the architecture truly works, someone will find a way to remove the constraints.

A very good challenge that not many others have picked up on, and I acknowledge this risk. Looking at history, we see only one trend, i.e. a relentless effort to keep improving technology and to solve any problems standing in the way of better solutions. When assessing the safety of an engineering solution, I think it is good to assume that at some point in the future the relevant technologies could evolve to achieve far more than is possible today. It then becomes important to also consider how quickly such evolution could occur, and what else would be changing over that period that could influence (positively or negatively) the impact of the engineering solution.

Let’s first look at the claim that ‘physics’ will prevent the Xzistor model from giving rise to an Artificial Super Intelligence (ASI). Currently, my position is that all experiences stored while the Xzistor agent ‘learns’ will take the shape of associations as defined by the Xzistor model, and that these associations will reside in a ‘physical’ substrate such as silicon (Xzistor demonstrators currently store these associations on a PC hard drive, typically at a rate of 10 associations per second). It is not inconceivable that someone will in future invent a radically new storage system (waves, light, quantum?) that does not sequentially fill up a physical structure made of atoms with this association information, but is something much more efficient. However, this has been attempted for over 50 years now and is clearly still a challenge. Let’s assume it will take a further 10 years to develop and become available in 2036.
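A quick back-of-the-envelope calculation shows why storage volume matters. The sketch below takes the quoted rate of 10 associations per second; the duty cycle parameter and the 1,000-byte record size are hypothetical assumptions, not Xzistor figures.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def associations_stored(rate_per_s: float, days: float, active_fraction: float = 1.0) -> float:
    """Associations accumulated at a constant storage rate.

    active_fraction is a hypothetical duty cycle (e.g. 0.5 if the agent
    only learns for 12 hours a day)."""
    return rate_per_s * SECONDS_PER_DAY * days * active_fraction


per_year = associations_stored(10, 365)
print(f"{per_year:,.0f} associations per year")  # 315,360,000 associations per year

BYTES_PER_ASSOCIATION = 1_000  # hypothetical record size, not an Xzistor figure
print(f"{per_year * BYTES_PER_ASSOCIATION / 1e9:,.1f} GB per year")  # 315.4 GB per year
```

Running continuously, the database grows by roughly 864,000 associations a day, so after a few years of human-like learning it holds on the order of a billion records, all of which must later be searched.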

For the Xzistor brain model, a major constraint is the time it takes to interrogate all the stored associations at 10 interrogations per second. This is where it compares incoming sensory and emotion states with what has been stored in the past (crucial for generalisation). In a serial system this is processing-intensive, and we see Xzistor demonstrators become slow and sluggish (jerky movements) when the association database grows so large that it causes processing latencies. Again, we can assume someone will find a way to interrogate some new type of storage technology at a much faster rate once it has been developed. Let’s assume this also becomes available in 2036.
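The latency effect can be illustrated with a simple cost model. The sketch assumes a naive serial scan that makes one comparison per stored association on every interrogation, and a hypothetical throughput of 5 million comparisons per second; at 10 interrogations per second each scan has a budget of 0.1 s.

```python
INTERROGATIONS_PER_S = 10            # interrogation rate quoted in the text
BUDGET_S = 1 / INTERROGATIONS_PER_S  # time available per interrogation: 0.1 s


def scan_time_s(n_associations: int, comparisons_per_s: float) -> float:
    """Time for one serial pass comparing the current sensory/emotion state
    with every stored association (one comparison per association)."""
    return n_associations / comparisons_per_s


def max_database_size(comparisons_per_s: float) -> int:
    """Largest database a single serial scan can cover within the budget."""
    return int(comparisons_per_s / INTERROGATIONS_PER_S)


throughput = 5e6  # hypothetical: 5 million comparisons per second
print(max_database_size(throughput))  # 500000

for n in (100_000, 500_000, 2_000_000):
    t = scan_time_s(n, throughput)
    status = "keeps up" if t <= BUDGET_S else "falls behind"
    print(f"{n:>9,} associations: {t * 1000:.0f} ms per scan ({status})")
```

Once a scan takes longer than the 0.1 s budget, the control loop can no longer keep pace, which matches the sluggish, jerky behaviour described above. The model deliberately ignores indexing, caching and parallel hardware, which is the kind of improvement the 2036 assumption stands in for.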

So, let’s assume all physical constraints on the Xzistor agent have been eliminated by 2036.

Now we start with a humanoid robot or virtual agent that needs to learn like a baby. Remember, with the Xzistor brain model there is no upfront training as with LLMs. Like a human, it learns from physical and mental experiences (memories), whereby it builds its own association database (world model, if you like). This will at best happen at the speed at which a human learns, and will include not only intellectual tasks but also body effector motions (limb movements, dexterity, balancing, crawling, walking, talking, etc.).

By 2046 the new ‘unconstrained’ Xzistor agent will be broadly comparable to a 10-year-old child, with a similar mental ability and all the emotions (if we choose to add all the human emotions). We are far from an ASI that would want to take over the world.

It should be clear that we are not facing an ‘intelligence runaway’ situation – rather a slowly evolving capability towards mimicking the human condition that will be far from ASI – even 20 years from now.

An assumption I am making is that a lot will happen in 20 years: with the help of rapidly expanding LLMs, mankind’s understanding of how AGI and ASI might pose risks to human life will become much more advanced. I would argue that the main threat of a runaway ‘intellectual’ ASI will come from the generative AI camp (LLMs) well before it comes from Xzistor agents.

So, in short, I am not contesting here that Xzistor will eventually, many years from now, be able to provide robots with adult-level AGI or even ASI. But we have a ‘slow road’ along which to carefully monitor progress, almost like raising a child, whilst we have full control over what emotions we endow the AGI/ASI with. Remember, we can remove aggression and add protective instincts towards humans and animals. This will to a large degree mitigate the risk of an uncontrolled ASI that could pose an existential threat to humans and other life on Earth. And using my model, one can make a case for why an advanced ASI would not choose to change or modify its own emotions (a separate discussion).

Question 5: **The real question:** While you’re building infant-AGI-by-design, labs like xAI, Anthropic, and DeepMind are racing toward systems already exceeding human capabilities in multiple domains. Does infant-level AGI solve any problem that current LLMs don’t already address better?

Firstly, let’s take a step back and understand what current AI models have achieved. These platforms excel at taking masses of data and then acting on a prompt from a human, providing probabilistically percolated responses based on what was asked and what data was provided upfront. The power of these models is mind-blowing as a tool to help solve problems where humans have provided data sets that can be interrogated. As I have mentioned before, many deem these current models to be at a post-PhD level in certain domains. They are solving intellectual problems the world wants solved, and I applaud them for that.

But if you look carefully at the trends in papers on what is still missing from these models, you quickly pick up that many AI experts are concerned because these models struggle with experiential intelligence, memory, continual learning, context, reasoning, semantic meaning, world models, inductive inference, etc. Now that they are looking at integrating these models into physical humanoid robots, they are realising that these models have never been ‘embodied’ and do not have autonomy or intent (they can only respond to human commands/prompts). They suffer from hallucinations and lack intuition, empathy and internal motivation. Robots driven by them will have no true understanding of what they are looking at, no way to attribute context, meaning or value to objects, and no subjective emotions or emotion-centric cognition (what some deem the basis of consciousness). They will also not learn to use language like infants do, but rather like zombie LLMs. This means they will string sentences together without any appreciation of the semantic meaning of the words they are using. Even reading the emotions on a human’s face will be a stone-cold exercise in pattern matching. This does not set these robots up to communicate with humans in a meaningful way in future.

And this is my point: a physical robot that runs on LLM-type foundation models will just be a zombie platform comprising sensors and motors, controlled by some type of LLM (generative AI).

These are not the truly intelligent and emotional robots of the type I see living amongst humans as equals one day – they will forever be known to only offer ‘fake AI’ (and that might be fine for Amazon shelf packers or car assembly bots).

Because the Xzistor brain model was not in the first place developed as merely a tool to derive answers from large data sets using prompts, it offers a whole different trajectory towards artificial intelligence – it in fact allows us to create ‘true AI’. Let me take a moment to explain why I want us to distinguish between ‘fake AI’ and ‘true AI’ as the difference is important for our discussion on AGI and ASI.

If we want to be precise, ‘artificial’ can only ever refer to a ‘manmade’ version of a ‘natural phenomenon’. In this case that natural phenomenon is intelligence as it occurs in the human brain. The functionality offered by LLMs uses only a single functional ‘principle’ derived from the human brain, namely neural networks, which is but one part of the human brain’s overall intelligence machinery. It is not the goal of LLMs to replicate all the key functions of the human brain to arrive at intelligence in that way (hence we call it ‘fake AI’), but that is exactly the goal of the Xzistor brain model (hence we call it ‘true AI’).

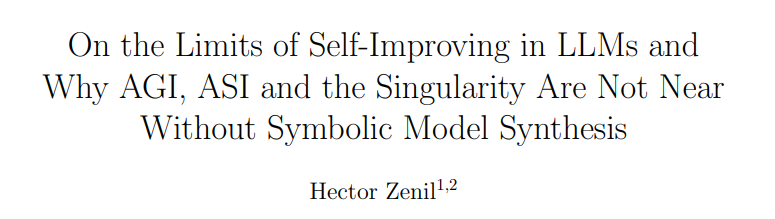

Can we unify the solutions offered by LLMs with the solutions offered by Xzistor? Yes, we can, and it is called ‘neuro-symbolic AI’. The Xzistor cognitive architecture can drive a symbolic, rule-based system with all the aspects missing from current LLMs, whilst incorporating certain functions used by LLMs to ‘parallelise’ and speed up its operation. This is achieved by using LLMs (artificial neural networks) to perform only some of the required Xzistor functions (mainly involving pattern matching across large data sets like the Xzistor association database). At the same time, the Xzistor agent can be given direct access to many LLMs (foundation models) and can be taught to use these as an aid (tool), without being constrained by their data-centric limitations. People like Gary Marcus argue that this is what is needed to prevent current generative AI models from ‘hitting a wall’ where they start to degenerate and lose effectiveness. Look at the title of this recent paper:

This will deliver an incredibly powerful unified system that not only principally emulates the cognition and emotion of human brains, but also has instinctive skills and/or a natural ability to learn to use LLMs wherever appropriate to solve certain ‘intellectual’ problems. I can hardly imagine a more powerful system, and the good news is that we as humans will be able to develop and grow it gradually over many years, at a pace that will not allow a ‘runaway intelligence’ (ASI) that can threaten our existence to be spawned for many years to come. That there will eventually be machines that are mentally and physically superior to humans, we might as well accept now. I think it would be naive to assume otherwise, and as with all new technologies it will be up to mankind to chart the way for developing these systems safely and beneficially.
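The “agent in charge, LLM as a tool” arrangement can be sketched in a few lines. This is a hypothetical illustration, not the Xzistor architecture: `SymbolicAgent`, its rules and the `fake_llm` stub are invented names, and the stub stands in for whatever real model API an implementation might wrap. The point it shows is that the rule-based layer decides when a tool is consulted, rather than the tool driving the agent.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable

# A "tool" is anything that maps a text query to a text answer.
# In a real system this might wrap an LLM API call; here it is a stub.
Tool = Callable[[str], str]


@dataclass
class SymbolicAgent:
    """Hypothetical rule-based controller that keeps decision-making for itself
    and only consults external tools for questions its own memory cannot answer."""
    known_facts: dict[str, str] = field(default_factory=dict)
    tools: dict[str, Tool] = field(default_factory=dict)
    log: list[str] = field(default_factory=list)

    def answer(self, question: str) -> str:
        # Rule 1: prefer what the agent has learnt from its own experience.
        if question in self.known_facts:
            self.log.append(f"memory: {question}")
            return self.known_facts[question]
        # Rule 2: otherwise use a tool as an aid, if one is available.
        if "llm" in self.tools:
            self.log.append(f"tool: {question}")
            return self.tools["llm"](question)
        # Rule 3: no memory and no tool, so admit ignorance.
        return "unknown"


def fake_llm(query: str) -> str:
    """Stand-in for a foundation model; no real API is called."""
    return f"(model answer to: {query})"


agent = SymbolicAgent(known_facts={"where is water?": "kitchen"}, tools={"llm": fake_llm})
print(agent.answer("where is water?"))  # kitchen
print(agent.answer("what is 2+2?"))     # (model answer to: what is 2+2?)
```

Keeping the tool behind a plain callable interface means any number of models could be plugged in or removed without changing the agent’s own rules.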

Question 6: **I’m genuinely curious about your implementation details**. Is there working hardware, or is this still theoretical? The philosophy is solid – I just wonder about the “infant AGI” framing when the field has moved so far beyond infant-level capabilities.

As I have mentioned before, the Xzistor Mathematical Model of Mind qualifies as a cognitive architecture, which by definition means it offers not only a complete theory of mind, but also implementations, i.e. physical and/or virtual instantiations. I went to a lot of trouble from the start (the early 1990s) to test my theory by implementing it in simulations and hardware. I very carefully considered what would be the simplest possible virtual and hardware demonstrators to validate the functions and effects I claim can be generated by the Xzistor brain model.

I get frustrated by people who expect more complex implementations because they do not understand the concept of a ‘proof-of-concept’ demonstrator. They often feel a simple Lego robot in a learning confine (sandbox) is too simple, too ‘reductionist’. They struggle to understand that any effort beyond what was necessary to prove the basic functions under dynamic conditions would have taken me many more years with no actual value added. These ‘skeptics’ sometimes remind me of the onlookers who were still incredulous after witnessing the Wright brothers’ first flight at Kitty Hawk with their ‘bare-bones’ demonstrator. These naysayers were unable to mentally extrapolate the achievements of that first machine to the point where they could say: “If this small winged machine can create enough lift to carry a human, a bigger machine with a bigger wing should theoretically be able to carry more humans, perhaps with bigger engines and more gasoline (longer range)…”

It seems that only the very smart can look at my simple virtual and physical robot implementations and appreciate that, although operating at much reduced resolution, they still see, feel, experience subjective emotions (good and bad), learn from experience, have world models, generalise to solve new problems, and can daydream, dream during sleep and reason (think). I can explain all of these functions and effects with reference to what I have physically built and tested – 100%. Again, the simple videos on the YouTube channel mentioned above show some of the validation tests I performed to demonstrate my brain model’s key features – Xzistor LAB YouTube Channel.

I stand ready to explain 99% of human (and animal) brain states and conditions based on the results of my validation tests. Of course, whether the world is ready to understand and accept my substantive evidence is another story, but that is not something I can influence…

While I wait for the world to understand the Xzistor Mathematical Model of Mind, I am encouraged by the different LLMs that now seem smart enough to leapfrog the AI experts in their understanding of the model, and these LLMs are now coming up with statements like: “The Xzistor brain model is a complete, mathematically precise, functional model (top down) of the brain that is biologically plausible and computationally tractable and that can explain cognition, combinatorially rich emotions, learning, thinking and consciousness…”.

And they are not wrong.